As I have explained previously, there were some problems with my paw detection. As SO-user points out:

> Seems like you would have to turn away from the row/column algorithm as you're limiting useful information.

Well, I realized I was limiting my options by purely looking at rows and columns. But when you look at the first data I loaded:

You might understand why that approach worked perfectly well. All paws are spatially and temporally separated, so there’s no need for a more complicated approach. However, when I started loading up other measurements, it became clear this wasn’t going to cut it:

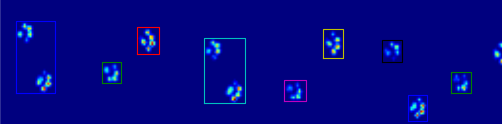

This made me turn to Stack Overflow again to get help with recognizing each separate paw. Thanks to Joe Kington, I had a good working answer within 12 hours, and he even added some cool animated GIFs to show off the results:

As you can see, it draws a rectangle around each area where the sensor values are above a certain threshold (a ridiculously low value of 0.0001 does a great job). The data first gets smoothed, though, so there are fewer dead areas within each paw. It then fills any holes inside each contact, labels the connected regions of filled sensors and returns the slices that enclose each of those regions.

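```python
import scipy as sp
import scipy.ndimage

def find_paws(data, smooth_radius=5, threshold=0.0001):
    """Return one bounding slice tuple per connected contact in `data`."""
    # Smooth the data so small dead areas inside a paw get bridged
    data = sp.ndimage.uniform_filter(data, smooth_radius)
    # Mark every sensor whose smoothed value exceeds the threshold
    thresh = data > threshold
    # Fill any enclosed holes so each paw becomes one solid region
    filled = sp.ndimage.binary_fill_holes(thresh)
    # Give each connected region its own integer label
    coded_paws, num_paws = sp.ndimage.label(filled)
    # Find the slices that enclose each labelled region
    data_slices = sp.ndimage.find_objects(coded_paws)
    return data_slices
```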
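To get a feel for what the returned slices look like, here is a small sketch on made-up data. It assumes the measurement is stored as an (x, y, time) array for a 30 by 30 sensor plate with 20 frames; both the layout and the sizes are my assumptions for illustration. The function is repeated so the snippet runs on its own.

```python
import numpy as np
import scipy.ndimage as ndimage

def find_paws(data, smooth_radius=5, threshold=0.0001):
    # Same steps as above: smooth, threshold, fill holes, label, slice
    data = ndimage.uniform_filter(data, smooth_radius)
    filled = ndimage.binary_fill_holes(data > threshold)
    coded_paws, num_paws = ndimage.label(filled)
    return ndimage.find_objects(coded_paws)

# Synthetic measurement (hypothetical): two square contacts at different times
data = np.zeros((30, 30, 20))
data[3:8, 3:8, 2:7] = 1.0       # first contact, frames 2-6
data[20:25, 18:23, 9:15] = 1.0  # second contact, frames 9-14

for i, (sx, sy, st) in enumerate(find_paws(data), start=1):
    # Each tuple is the bounding box of one contact in space and time
    print(f"contact {i}: x {sx.start}-{sx.stop - 1}, "
          f"y {sy.start}-{sy.stop - 1}, frames {st.start}-{st.stop - 1}")
```

Note that the boxes come out a bit larger than the raw contacts, in time as well as space, because the smoothing spreads every value over its neighbours before the threshold is applied.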

He also creates four rectangles that move to the position of each paw. This helps tremendously with deciding which paw corresponds to which contact, as we only have to identify the first rectangle and can start sorting from there.
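The "start sorting from there" step could look roughly like the sketch below. It is not Joe's code: it assumes contacts can be ordered by the frame in which they first appear and that the dog follows a fixed footfall order, here a hypothetical `GAIT_ORDER` for a walk. `assign_paws` and `GAIT_ORDER` are made-up names, and real trots or irregular steps would break the fixed-order assumption.

```python
# Hypothetical footfall order for a walking dog (an assumption, not measured)
GAIT_ORDER = ["LF", "RH", "RF", "LH"]

def assign_paws(slices, first_paw, order=GAIT_ORDER):
    """Label contacts cyclically, starting from a manually chosen first paw.

    `slices` is a list of (x, y, time) slice tuples such as find_paws returns.
    """
    # Sort contacts by the frame in which they first touch the plate
    ordered = sorted(slices, key=lambda s: s[2].start)
    offset = order.index(first_paw)
    # Walk through the assumed gait order, beginning at the first paw
    return [(order[(offset + i) % len(order)], s) for i, s in enumerate(ordered)]

# Made-up contacts, deliberately not in time order
contacts = [
    (slice(18, 27), slice(16, 25), slice(7, 17)),
    (slice(1, 10), slice(1, 10), slice(0, 9)),
    (slice(5, 12), slice(20, 28), slice(12, 20)),
]
for paw, s in assign_paws(contacts, first_paw="RF"):
    print(paw, "lands in frame", s[2].start)
```

Once the first contact is labelled by hand, every later contact gets its label from the assumed order, which is why deciding on that first rectangle is the only manual step.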

Sadly, I haven't had time to try this out on some of the nastier measurements (i.e. where the paws are very close together and the smoothing might make them interconnect). I'm also curious about the performance of the solution, as I'm not sure how it knows to stop looking when there's no more data (like when the dog walked over the plate within 50% of the frames). Anyway, I'll update this post as soon as I have more results of my own!
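The worry about smoothing can at least be checked on a toy example. `scipy.ndimage.uniform_filter` treats `smooth_radius` as the window *size*, so a value of 5 spreads every sensor over two neighbours on each side. The sketch below places two made-up paws in a single frame with a three-sensor gap between them and counts the contacts for a few window sizes; `count_contacts` is a hypothetical helper that reuses the same steps as `find_paws`.

```python
import numpy as np
import scipy.ndimage as ndimage

def count_contacts(data, smooth_radius, threshold=0.0001):
    """Count connected contacts using the same steps as find_paws."""
    smoothed = ndimage.uniform_filter(data, smooth_radius)
    filled = ndimage.binary_fill_holes(smoothed > threshold)
    _, num_paws = ndimage.label(filled)
    return num_paws

# Two synthetic paws in one frame, separated by a gap of 3 empty columns
frame = np.zeros((20, 20))
frame[5:10, 2:7] = 1.0
frame[5:10, 10:15] = 1.0

for size in (1, 3, 5):
    # A larger window widens each paw until the gap is bridged
    print(size, count_contacts(frame, size))
```

With a window of 5 both paws grow into the same column and get merged into one contact, while windows of 1 and 3 keep them apart. As for performance, each `ndimage` call processes the whole array, so empty frames still cost computation even though they never produce a label.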